OpenAI Launches ChatGPT for Clinicians

On April 22, 2026, OpenAI announced the launch of ChatGPT for Clinicians, a version of ChatGPT designed to support clinical tasks such as documentation and medical research so that clinicians can focus on providing high-quality patient care. The service is free for all verified physicians, nurse practitioners, physician assistants, and pharmacists in the United States.

According to the official announcement, the U.S. healthcare system is under immense pressure. Clinicians must manage growing administrative demands while caring for more patients, and many have begun turning to AI tools like ChatGPT for support. 2026 data from the American Medical Association show that physician use of AI has reached an all-time high: 72% of physicians now report using AI in clinical practice, up from 48% the previous year. Millions of clinicians worldwide use ChatGPT every week to support clinical care, applying it to nursing consultations, writing and documentation, and medical research. The share of clinicians using ChatGPT has more than doubled over the past year.

OpenAI’s team worked with hundreds of physician advisors to strengthen ChatGPT for Clinicians, ensuring it supports key clinical use cases.

Features of ChatGPT for Clinicians:

- Advanced AI Model for Complex Clinical Questions: Free access to cutting-edge models that help clinicians reliably handle complex clinical questions, research, and documentation.

- Skills for Reproducible Clinical Workflows: Transform common workflows into reusable skills, allowing ChatGPT to consistently follow the same steps for tasks like referral letters, prior authorizations, and patient instructions.

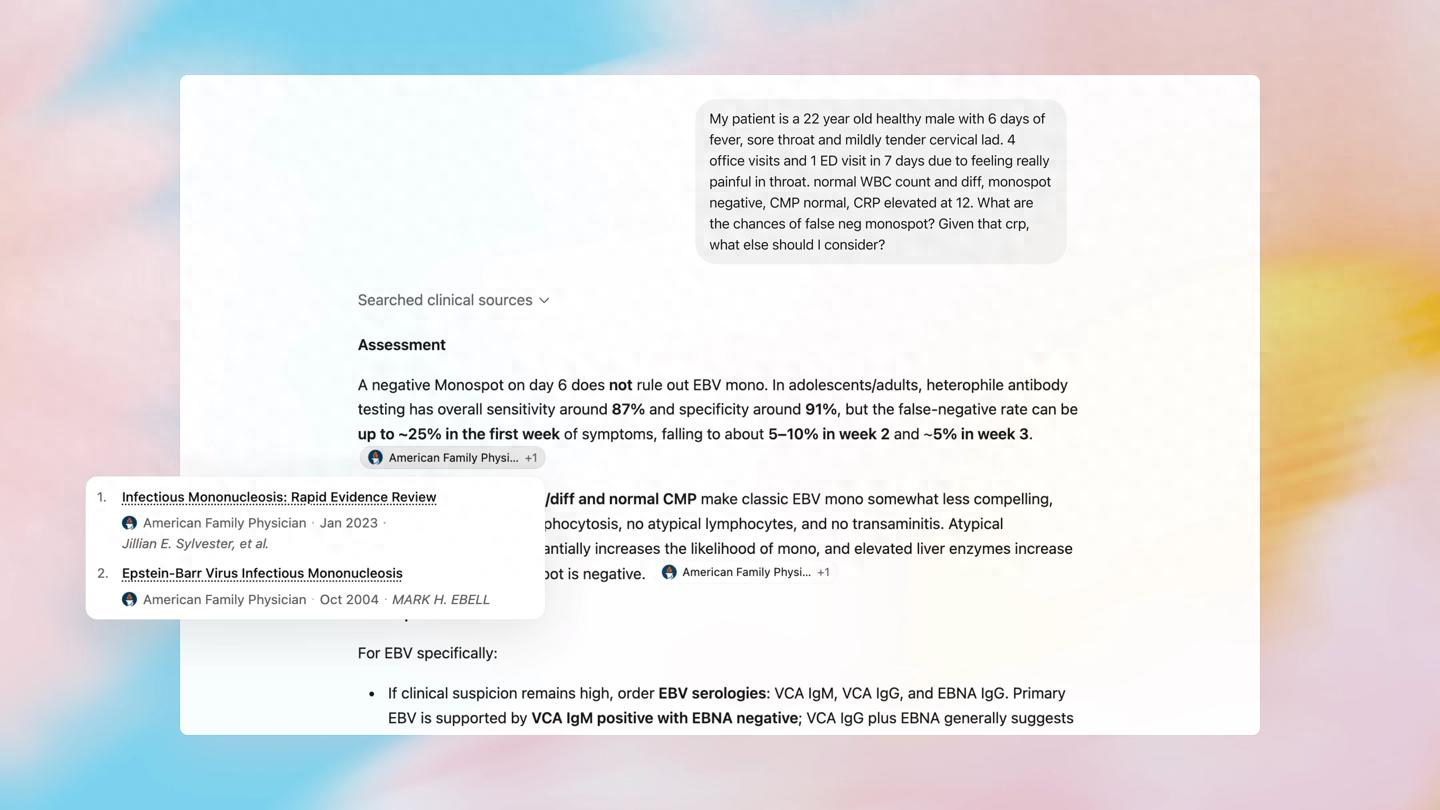

- Trusted Clinical Retrieval: Work through cases faster and with more confidence, drawing on real-time evidence from millions of authoritative, peer-reviewed medical sources.

- In-depth Research Across Medical Journals: Hand off literature reviews to ChatGPT, select trusted sources, and steer the research as needed to produce comprehensive, well-cited reports in minutes.

- CME from Real Clinical Questions: When clinicians research clinical questions in ChatGPT, qualifying evidence reviews can automatically count toward continuing medical education (CME) credit, with no separate courses or additional documentation required.

- Optional HIPAA-Compliant Support: Many clinical tasks do not involve protected health information (PHI), but when they do, eligible accounts can obtain HIPAA support through a Business Associate Agreement (BAA).

- Account Security and Privacy: Conversations are not used to train models, and safeguards such as multi-factor authentication help protect sensitive work.

OpenAI has been improving the safety and accuracy of ChatGPT’s responses in health scenarios. Its physician advisors continuously review model responses and provide feedback on quality, reasoning, credibility, and safety. To date, they have reviewed more than 700,000 model responses reflecting how clinicians and patients use ChatGPT in the real world, and a physician reviews a new model response every few minutes. In third-party evaluations, OpenAI’s models have been rated the best-performing systems on real-world medical tasks.

This rigor also shaped the development of ChatGPT for Clinicians. Before release, physician advisors tested 6,924 conversations drawn from their daily work, covering clinical care, documentation, and research. Overall, physicians rated 99.6% of the responses as safe and accurate. In 355 cases for which three independent physicians identified the relevant citations, ChatGPT cited those sources more often than the human physicians did. Nevertheless, ChatGPT for Clinicians is designed to provide information support to clinicians, not to replace their judgment or expertise.

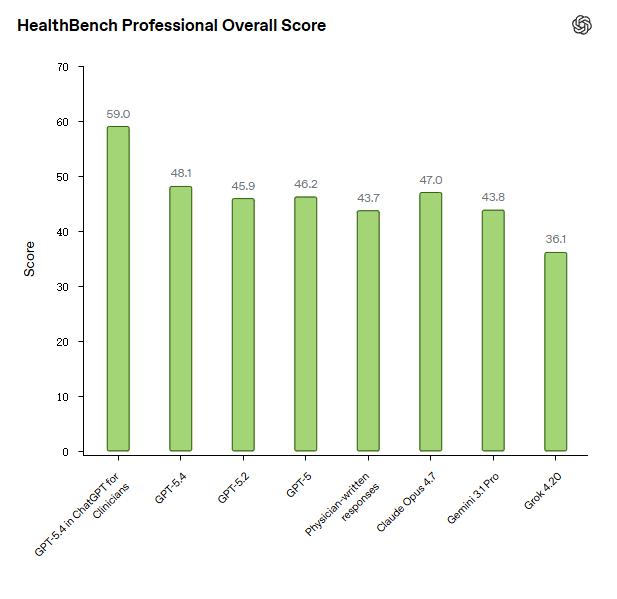

OpenAI also introduced HealthBench Professional, an open benchmark of real clinician chat tasks covering three use cases: nursing consultation, writing and documentation, and medical research. Building on HealthBench’s broader evaluation of health conversations, it uses physician-written conversations and scoring rubrics, multi-stage physician adjudication, and careful data filtering to measure performance and safety on common clinician chats.

HealthBench Professional examples were selected for quality, representativeness, and difficulty so that the benchmark can keep measuring progress over time. About one-third of the examples involve physicians deliberately “red teaming” OpenAI’s models, trying to surface problems. In OpenAI’s selected dataset, the most challenging dialogues are 3.5 times harder than the model’s.

OpenAI reports results across models, as well as for clinicians using ChatGPT. As a strong baseline, OpenAI asked human physicians to write their own answers within their specialties, with unlimited time and web access. OpenAI found that GPT-5.4 in the ChatGPT for Clinicians workspace outperformed base GPT-5.4, all other OpenAI and external models, and the human physicians.
