Introduction

When coding, AI assistants often either respond too slowly, interrupting your flow, or lack the intelligence to produce quality code. Cursor’s newly released Composer model breaks this dilemma by leveraging reinforcement learning (RL) technology to achieve a dual peak of intelligence and speed—boasting programming efficiency four times that of similarly intelligent models while accurately adapting to real codebase standards.

Have you ever wondered why AI programming assistants often feel “almost there”? They are either smart but frustratingly slow or quick but produce incorrect code. This contradiction has troubled me until I saw Cursor’s AI researcher Sasha Rush’s presentation at Ray Summit 2025. They introduced a new model called Cursor Composer, which solves this problem using a completely different approach: training an AI agent that is both smart and fast through reinforcement learning (RL).

After watching the presentation, I felt that this was not just a technical advancement but a shift in mindset. The Cursor team is not chasing universal benchmark scores but focusing on solving real-world programming problems. They use reinforcement learning to let the model learn in real codebase environments, understand coding standards, learn to use various tools, and know when to execute tasks in parallel. More importantly, they integrated the entire product infrastructure into the training process, allowing the AI to function like a real user using Cursor during training. This “training as product” philosophy made me rethink how AI tools should be constructed.

The Need for a Fast and Smart Programming AI

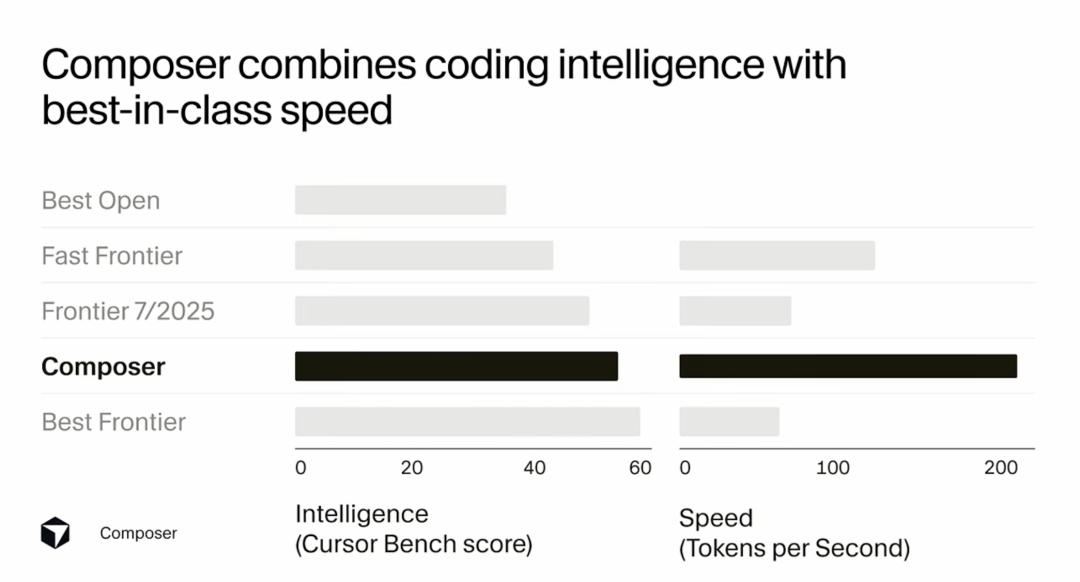

Sasha Rush mentioned at the beginning of the presentation that Cursor Composer performs nearly on par with the best Frontier models on their internal benchmarks and outperforms all models released last summer. Its performance is significantly better than the best open-source models and those marketed as “fast.” What is truly impressive is that this model’s token generation efficiency is four times that of similarly intelligent models. This means it is not only smart but astonishingly fast, even quicker than products specifically designed for rapid coding.

I have always believed that the “speed” of AI tools is not just a technical metric but the core of user experience. Imagine you are coding and suddenly need to refactor a complex function. If the AI assistant takes 30 seconds to provide a suggestion, that time is enough to disrupt your thought process and break your focus. However, if the AI can respond in 2 seconds, you can maintain the continuity of your thoughts and stay immersed in the flow of programming. This “speed that doesn’t interrupt your thoughts” experience is what truly adds value.

The Cursor team understands this deeply. Their inspiration came from one of the most popular features in the Cursor application: Cursor Tab. This is a fast, intelligent model that feels very smooth and enjoyable for users. Sasha Rush stated that making the model fast enough to support interactive use allows developers to maintain their thought chain and stay in a workflow state. They aimed to build an agent model that provides a similar experience. Thus, they created a prototype model, codenamed Cheetah, specifically for agentic coding to provide a fast experience. After releasing this prototype in the application, user feedback excited them, with many saying it felt “completely different,” even likening it to “alien technology.” This convinced them that if they could build a smarter model while maintaining the same efficiency, it would lead to a revolutionary experience.

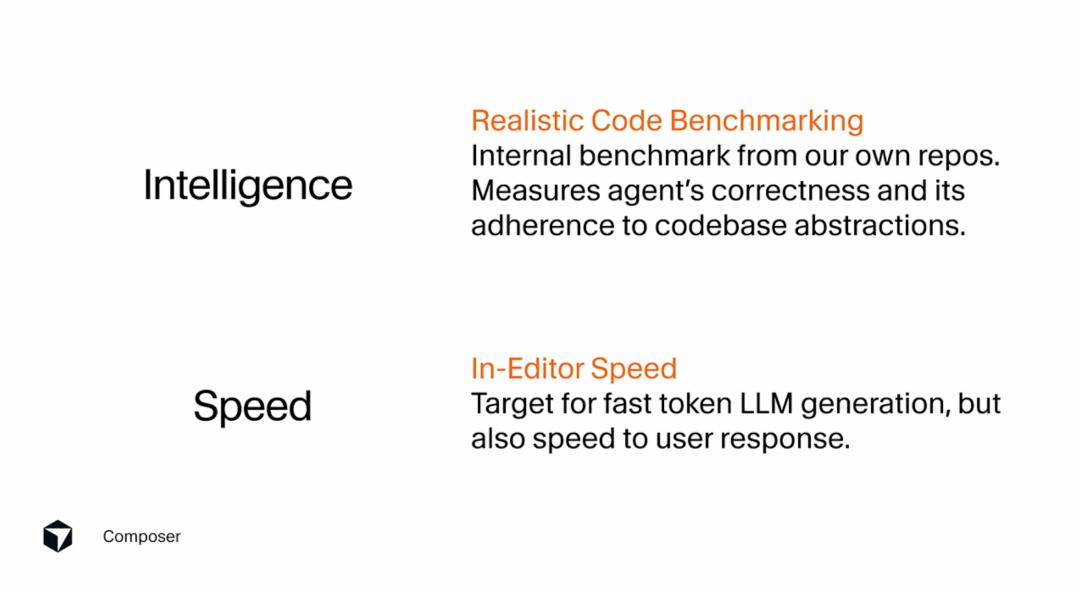

I particularly resonate with Sasha Rush’s point that they are not pursuing arbitrary benchmark scores but aim to build a model that feels good to use in real programming work. They constructed an internal benchmark from their own codebase to measure the model’s ability to work within large codebases and adhere to the codebase’s own standards and norms. These intelligent factors are what truly matter in everyday software engineering. Many times, AI models score high on standard tests but perform mediocrely in real work scenarios because they are not optimized for actual workflows.

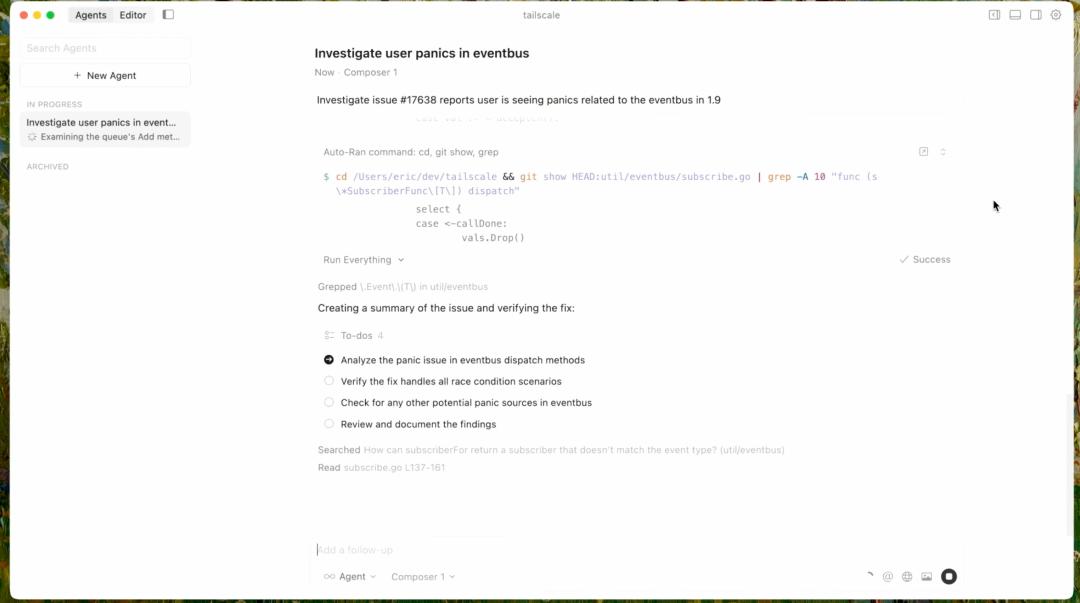

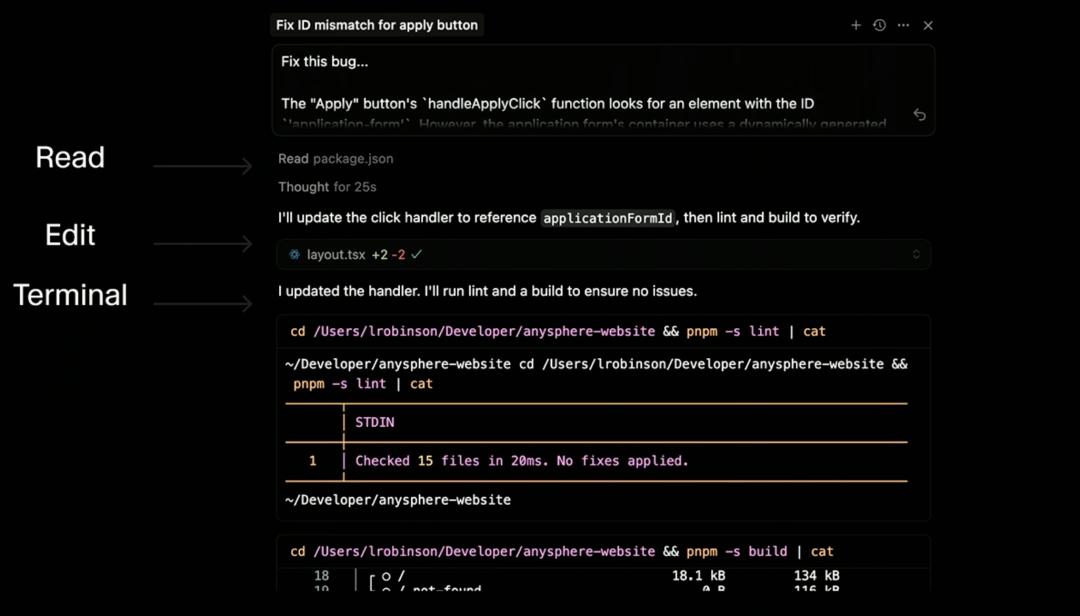

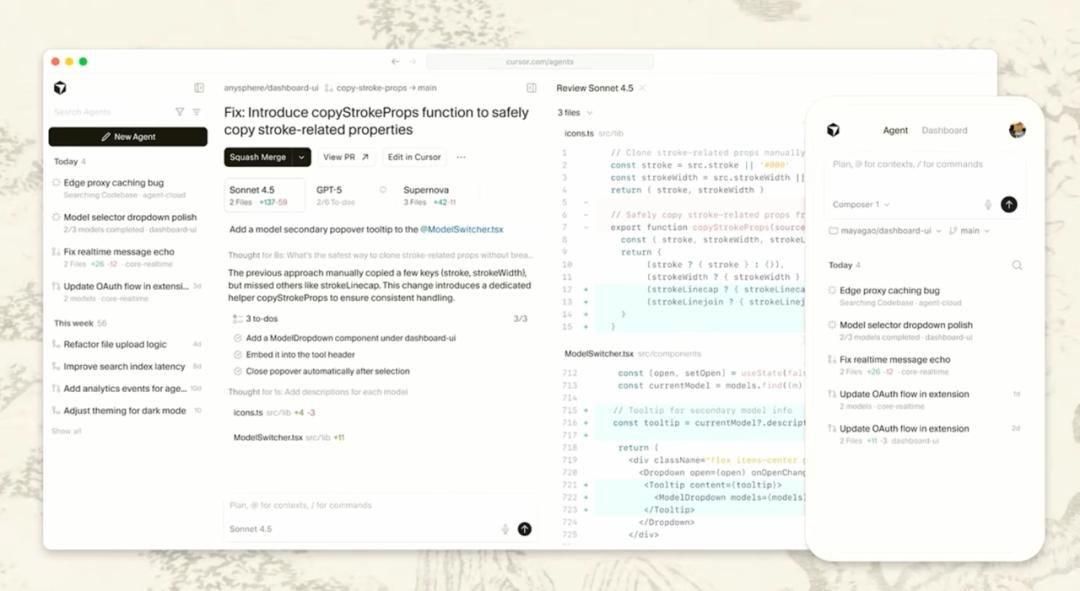

The Cursor team’s goals are dual: to be both intelligent and fast. “Fast” means not only efficiently generating tokens but also running very quickly in the editor. This requires the model to generate edits quickly and utilize techniques like parallel tool calling to produce results rapidly. When you combine these two goals, you get a model that feels entirely different in practice. In demonstration videos, users submit a query and immediately see the model calling multiple tools, running terminal commands, searching the codebase, making edits, and writing to-do lists, and just one or two seconds later, they receive a complete edit and summary of code changes. This experience is entirely different from typical editor agents used daily.

Agent RL: Making AI Work Like Real Developers

Sasha Rush spent considerable time explaining how they use agent RL (agent reinforcement learning) to train Composer. I found this part particularly enlightening as it reveals the mindset needed to build genuinely useful AI tools.

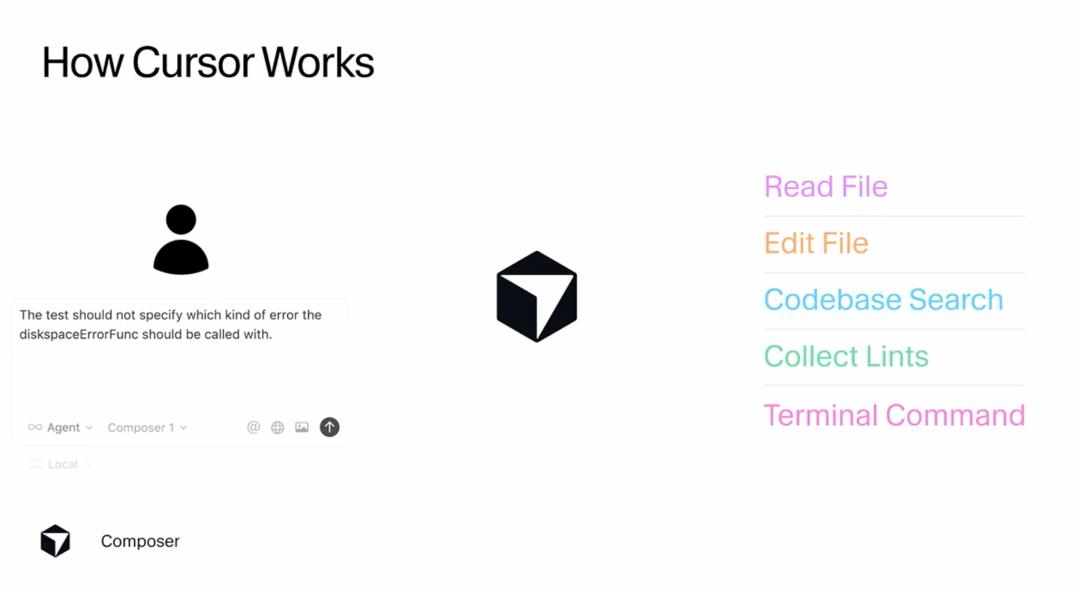

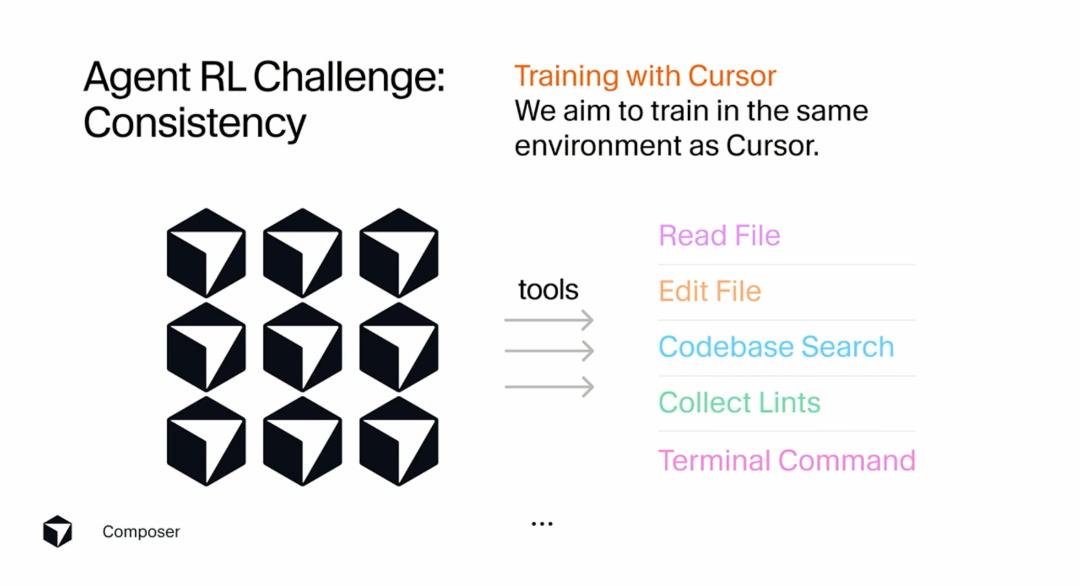

From the user’s perspective, the workflow with Cursor is straightforward: users submit a query to the Cursor backend, and the agent reads the query and performs a series of tool calls. Sasha Rush mentioned that we can primarily understand the agent as interacting in a “tool space.” It can choose from a variety of tools that can change the user’s code. In practice, Cursor uses about ten tools, but we can simplify this to include reading files, editing files, searching the codebase, collecting lints, and running terminal commands. The agent can call these tools either serially or in parallel if it believes that will yield good results.

At its core, this agent is still just a large language model, generating tokens. Some of these tokens can be understood as forming XML patterns, enabling it to call tools and their parameters. However, from a reinforcement learning perspective, we can mainly view it as taking actions in the combination space of tool calls. When you look at the Cursor frontend, the rollouts you see are the processes of combining different tool calls to make changes. For read operations, the frontend simply summarizes them; for edits, you see the entire change in real-time; for terminal calls, you see both the tool calls and the terminal’s output. This is essentially how the agent acts in your IDE world.

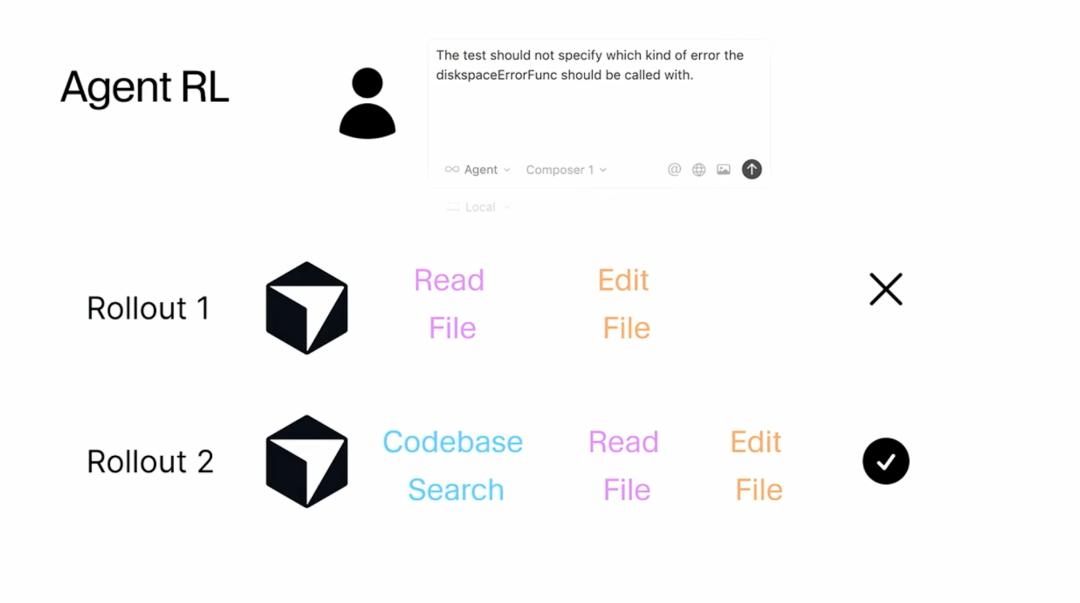

What I find most interesting is how they conduct reinforcement learning training. Sasha Rush emphasized that they try to simulate how Cursor operates in a production environment as closely as possible. This means they treat training data as user queries sent to the model, and then the agent calls a series of tools to attempt to achieve the goal. However, the difference with reinforcement learning is that they perform many different rollouts from the same starting point. You can think of this as running many instances of Cursor in parallel. In rollout 1, the model might read a file and then edit it. But in rollout 2, due to the probabilistic nature of LLMs, it might follow a different sequence of tools and take a different path. They then score the outputs of these two choices, determining that rollout 2 is better than rollout 1, and update the model parameters based on this change.

It sounds simple, right? But Sasha Rush noted that all the interesting challenges come from how to scale this basic process to the extreme, and each step of the scaling process presents challenges. This reminds me that often the core ideas of technology may be simple, but the real difficulty lies in executing them to the extreme and making them practically applicable.

Three Major Challenges: Matching Training and Inference, Long Rollouts, and Consistency

Sasha Rush elaborated on three core challenges encountered in this agent-style reinforcement learning. I find these challenges very representative, as they apply not only to programming AI but also to nearly all scenarios requiring AI agents to be trained in real environments.

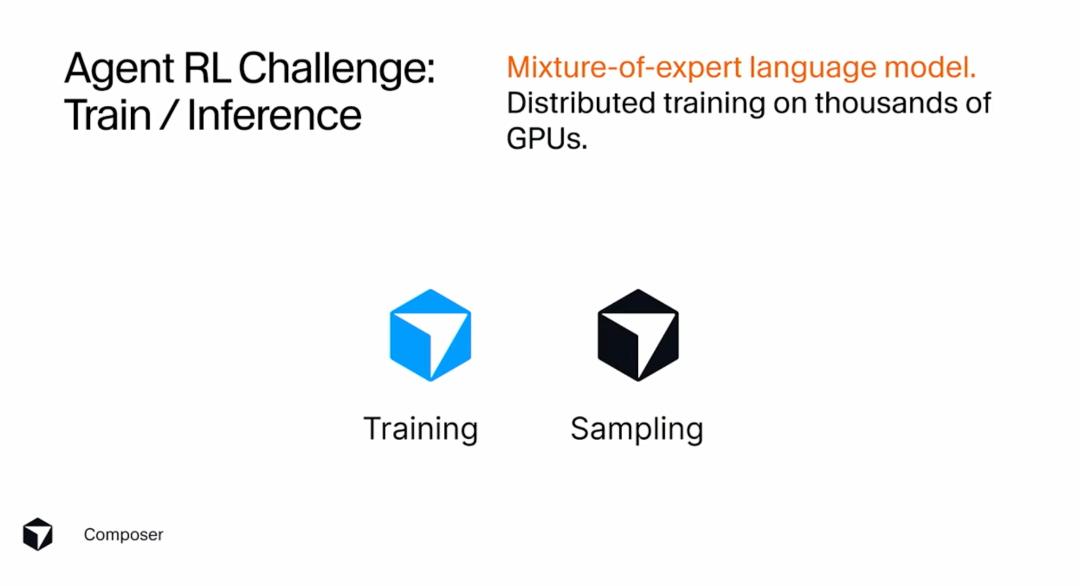

The first challenge is matching training and inference. They need to train a mixture of experts language model to achieve optimal parallel performance, which requires distributed training on thousands of GPUs. If you are only doing pre-training or supervised fine-tuning, that is already difficult enough, but when you do reinforcement learning, the difficulty doubles because you must have both training and sampling versions that must work in sync. I believe this challenge reveals a deeper issue: the model used in real products and the one used in training must maintain a high degree of consistency in architecture, behavior, and performance; otherwise, what is trained may not work at all in the product.

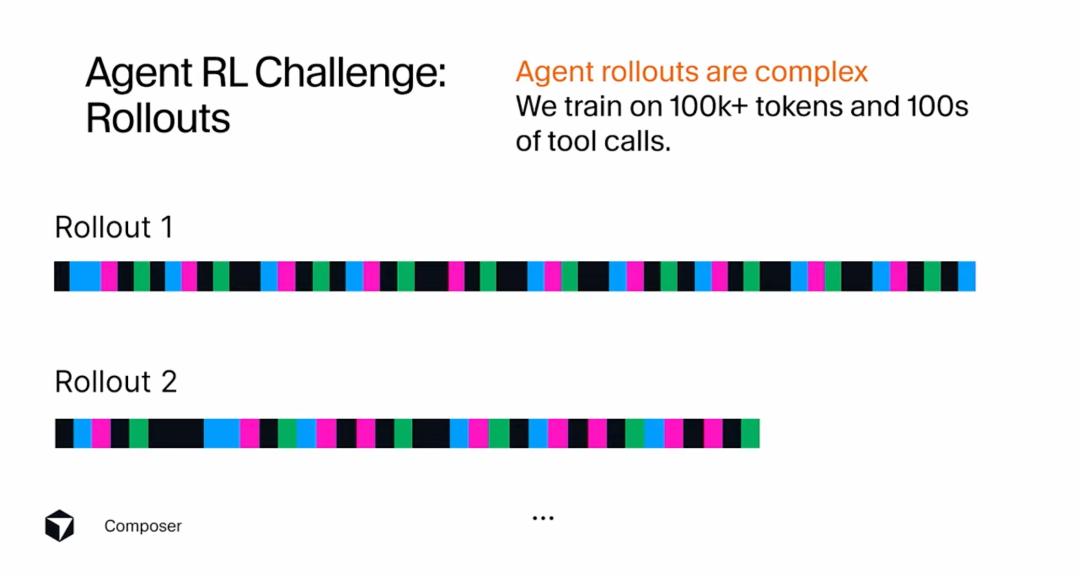

The second challenge is long rollouts. When they train with real code changes, rollouts are much more challenging than demonstrated. In modern models, rollouts use between 100,000 to 1,000,000 tokens and involve hundreds of different tool calls throughout the process. Complicating matters, different rollouts may produce varying numbers of tool calls, potentially requiring significantly different amounts of time. This makes me realize that real-world tasks are often much more complex than we imagine. A seemingly simple request like “refactor this function” might require the AI to read a dozen related files, search for usage examples in the codebase, run tests, check lints, and only then make the correct modifications. If training only uses simple toy examples, the model will never learn to handle this complexity.

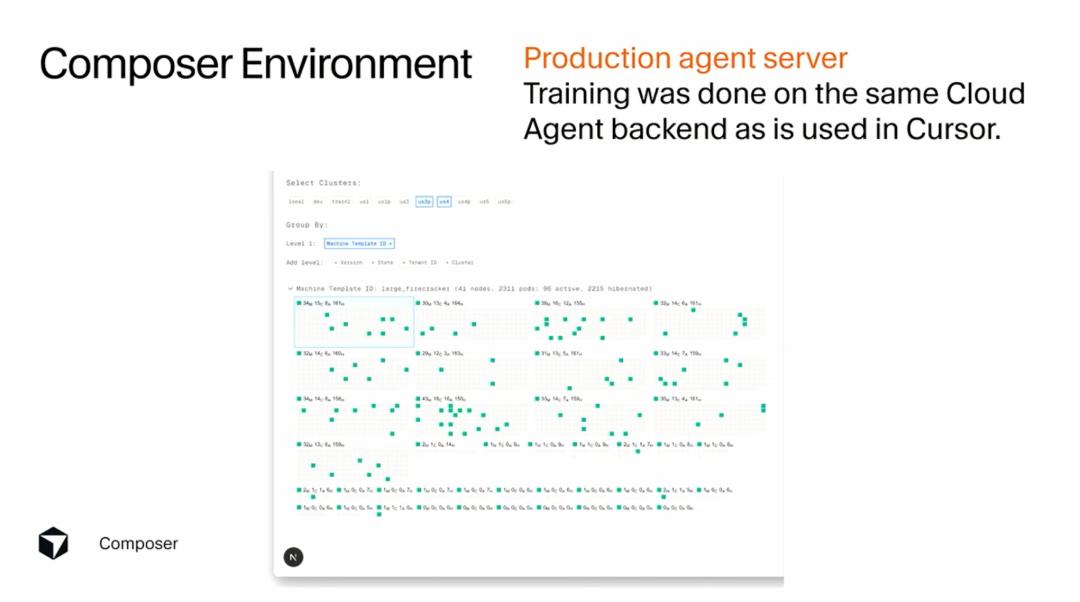

The third challenge is consistency. What they are doing is essentially “training through product production.” They have a Cursor agent and want to simulate it as closely as possible in reinforcement learning. This means they want to use the exact same tool formats and tool responses as in the production product but at a larger scale. This challenge is particularly interesting because it breaks the boundaries of traditional machine learning. Typically, we separate training environments from production environments, but the Cursor team chose to keep them as consistent as possible. The benefit of this approach is that every trick and tool usage method learned during training can be directly transferred to the real product.

Sasha Rush emphasized that these three issues reflect challenges in scaling machine learning systems, but the actual solutions to these challenges lie in infrastructure choices. I completely agree with this viewpoint. Many times we view machine learning as purely an algorithmic and mathematical problem, but in reality, whether an idea can be turned into a genuinely useful product often depends on how strong and flexible your infrastructure is.

Infrastructure: The Key to Making the Impossible Possible

Sasha Rush spent a lot of time explaining their infrastructure architecture, which I find very worthwhile to understand in depth, as it showcases what is needed to build truly scalable AI systems.

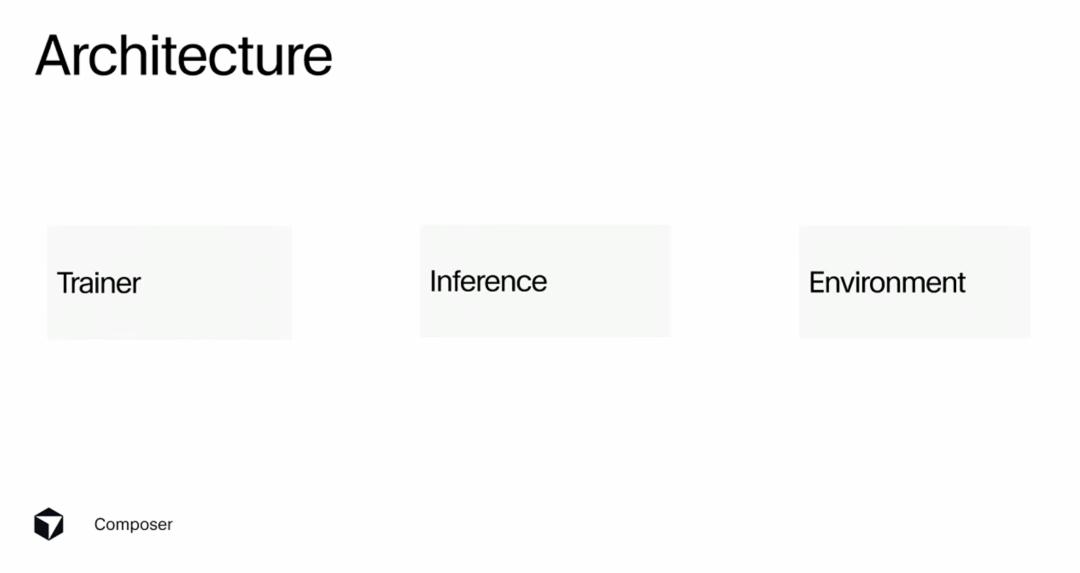

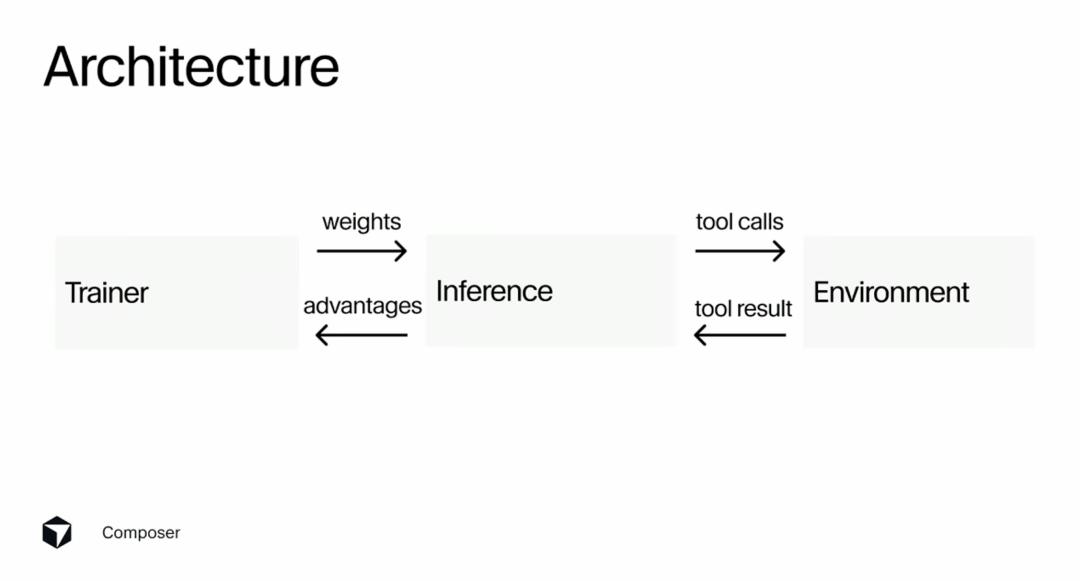

At a high level, they have three different servers: a trainer, an inference server, and an environment server. The trainer mainly uses PyTorch and resembles a standard machine learning stack scaled to a very large size. The inference server primarily uses Ray to orchestrate rollouts. The environment server uses microVMs to launch stateful versions of these environments, allowing them to make file changes, run terminal commands, and execute linters. You can think of this as running a mini version of Cursor. These three components need to interact with each other to form a complete training loop.

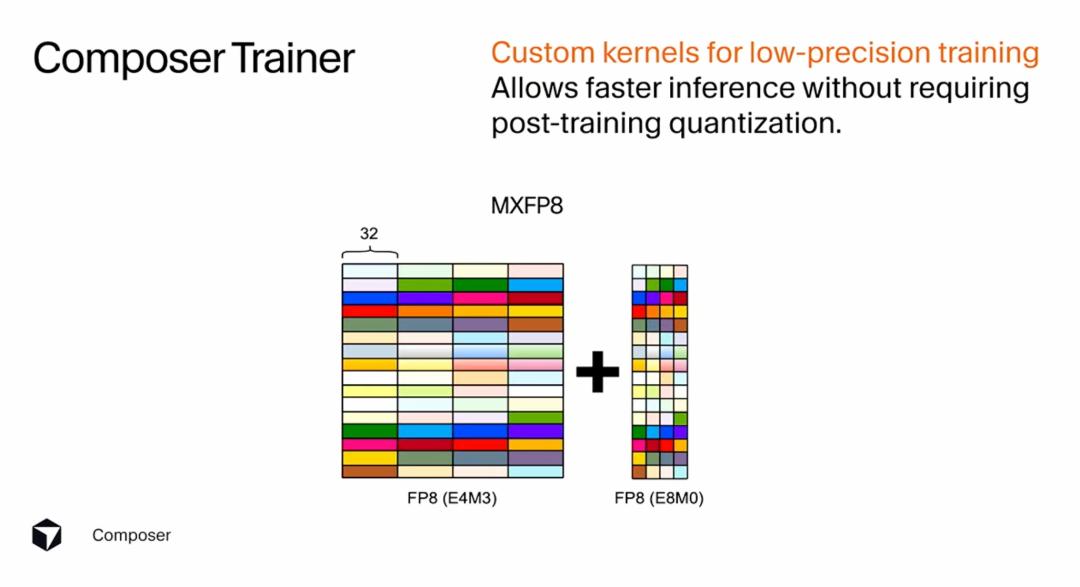

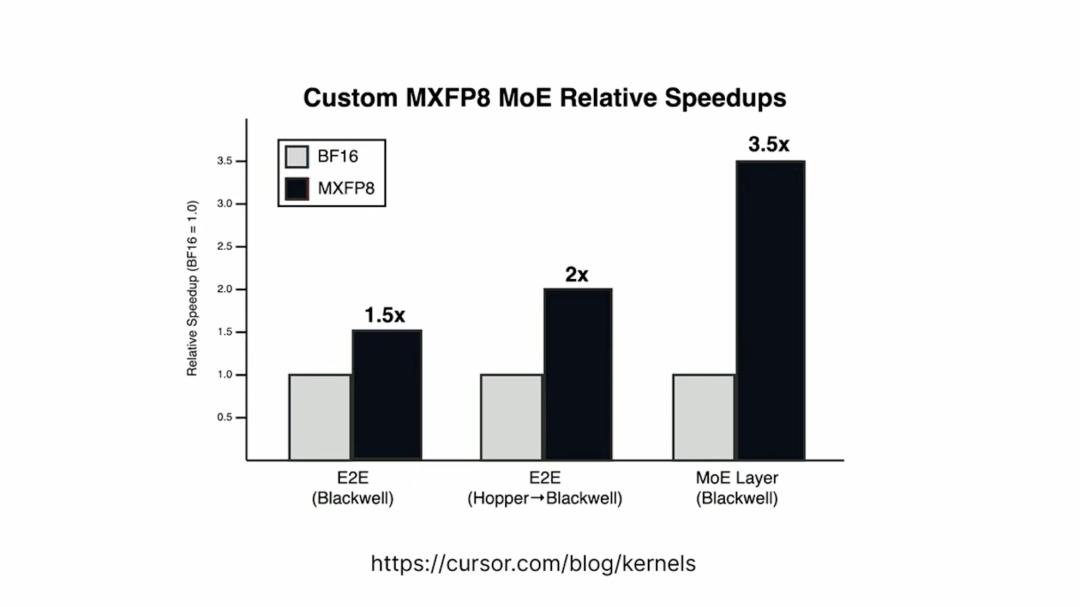

Regarding the trainer, they made a very interesting optimization: developing a custom kernel library that supports low-precision training. Low-precision training speeds up the training process and allows them to run sampling efficiently without needing any post-training quantization. They use a microscaling format called MXFP8. The idea is that they can work with FP8 precision but use an additional scaling factor to achieve better precision and higher quality training. Sasha Rush mentioned that they developed a custom kernel using this microscaling format for the latest NVIDIA architecture, which provides a 3.5x speedup on Blackwell chips for the mixture of experts layers.

I believe this focus on low-level optimization is crucial. Many AI teams may settle for using off-the-shelf training frameworks and standard precision, but the Cursor team chose to delve into kernel-level optimization. This investment not only brought significant speed improvements but also allowed them to train larger, more complex models while maintaining efficiency in both training and inference. This “refusal to settle” attitude is a common trait of top teams.

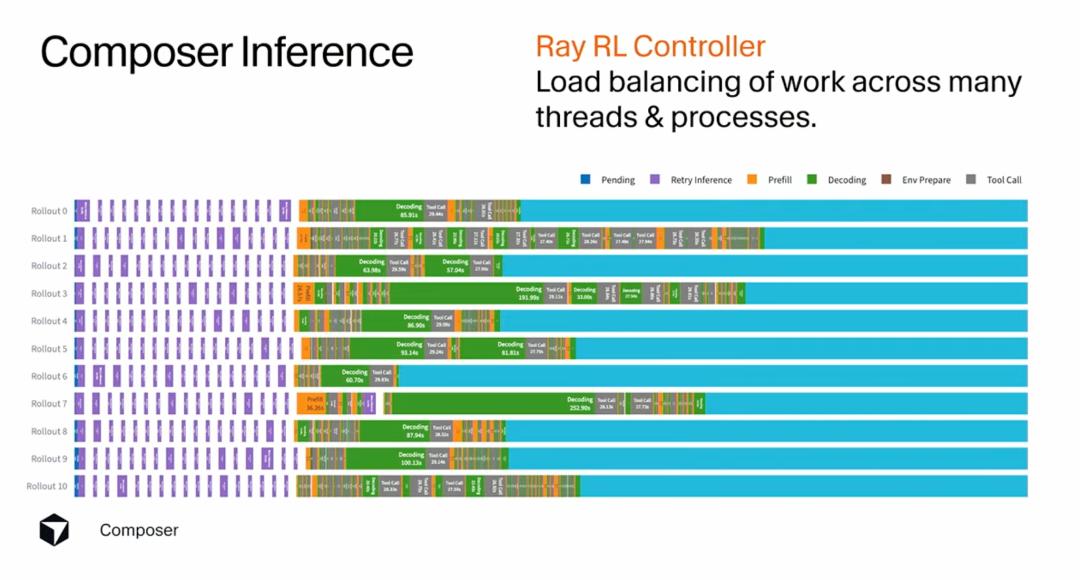

The inference server faces the primary challenge of stragglers (lagging processes). If you do not think through this process and just let the agent do its thing, you will encounter issues. This is because rollouts may call terminal commands or install entire libraries; they can do anything they want. So if you run ten rollouts, they may return at different times. They solved this problem using Ray and a single controller interface, allowing them to load balance across many different threads and processes, making this part of the process efficient.

I find this problem particularly illustrative of the complexity of real-world AI systems. Ideally, all rollouts should take roughly the same amount of time, but in reality, they can vary significantly. Some may only need to read a few files to complete, while others may require running complex build processes. If you cannot effectively handle this heterogeneity, the entire training process will be dragged down by the slowest rollout, leading to wasted resources and inefficiency.

Perfect Integration with Production Environment: The Philosophy of Training as Product

Sasha Rush emphasized one point that impressed me: their goal is to train through the production of the Cursor product. One interesting aspect of Cursor is that they can design both the product itself and the machine learning training simultaneously. Fortunately, during the process of building the reinforcement learning stack, Cursor released a product called cloud agents. This allows you to use the agent offline, and Sasha Rush mentioned that he often uses it to check model performance while riding the subway. As part of this product, they launch virtual machines of user environments, allowing the agent to change code and run terminal commands. They can use the same infrastructure for reinforcement learning training.

This means they have a production agent server that is identical when running the cloud agent and during reinforcement learning training. I think this is a very clever design decision. Many companies completely separate training environments from production environments, leading to trained models performing below expectations in real products. But Cursor chose to keep them entirely consistent, allowing the model to learn how to perform better in the real product during training.

Of course, this also presents challenges. The workload during peak reinforcement learning training can be much more bursty than when running a standard product. So they must handle this burstiness when launching many environments for training, ensuring the product runs smoothly. Sasha Rush showcased a dashboard written with Composer that displays backend utilization. I find this detail interesting as it shows they have begun using the tools they built to improve their workflows.

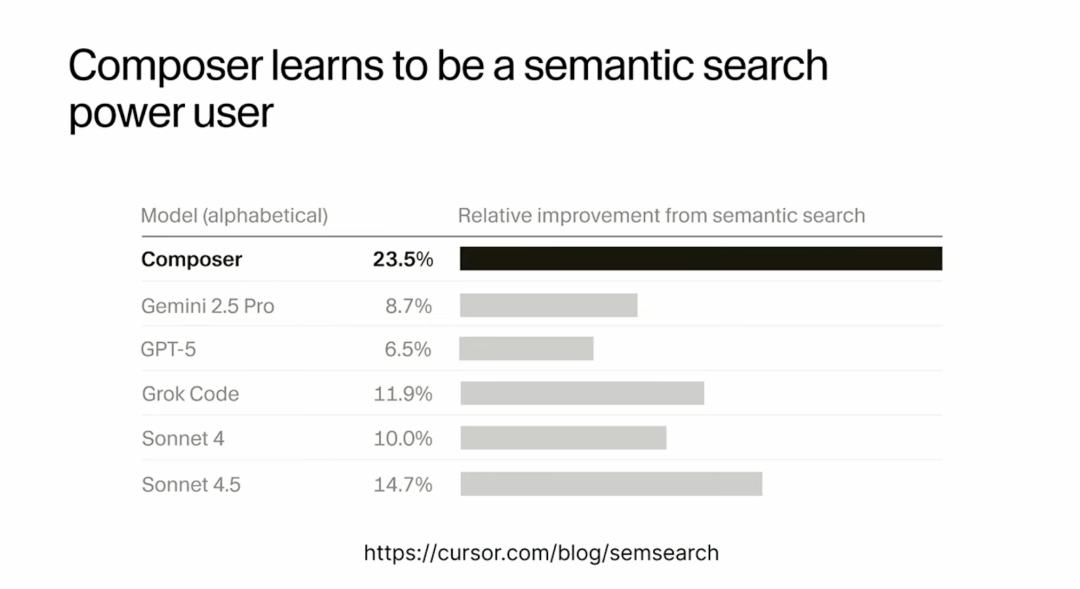

You may wonder why it is worth spending so much time actually using the real production environment. They could simulate all these different structures or try to mimic how it works. But Sasha Rush provided a compelling reason: they can introduce specific tools that they believe are very valuable for the agent. One of these is that they trained their embedding model for powerful semantic search. When you use Cursor, it indexes all your files, allowing the agent to use natural language queries to find files it might want to edit.

They found that this semantic search capability helps all the different agents used in Cursor, but it is particularly beneficial for Composer. This is because they can train the model to be an advanced user of this tool using the exact same model and structure as in production. This made me realize that AI tools not only need to be smart but also need to know how to effectively use the tools available to them. Just as a great developer knows not only programming languages but also how to use IDEs, debuggers, version control systems, and other tools, a great AI agent also needs to learn how to fully leverage its toolbox.

Observations One Week After Composer’s Release: RL Really Works

Sasha Rush shared some observations from the first week after Composer’s release, and this data deepened my understanding of the potential of reinforcement learning.

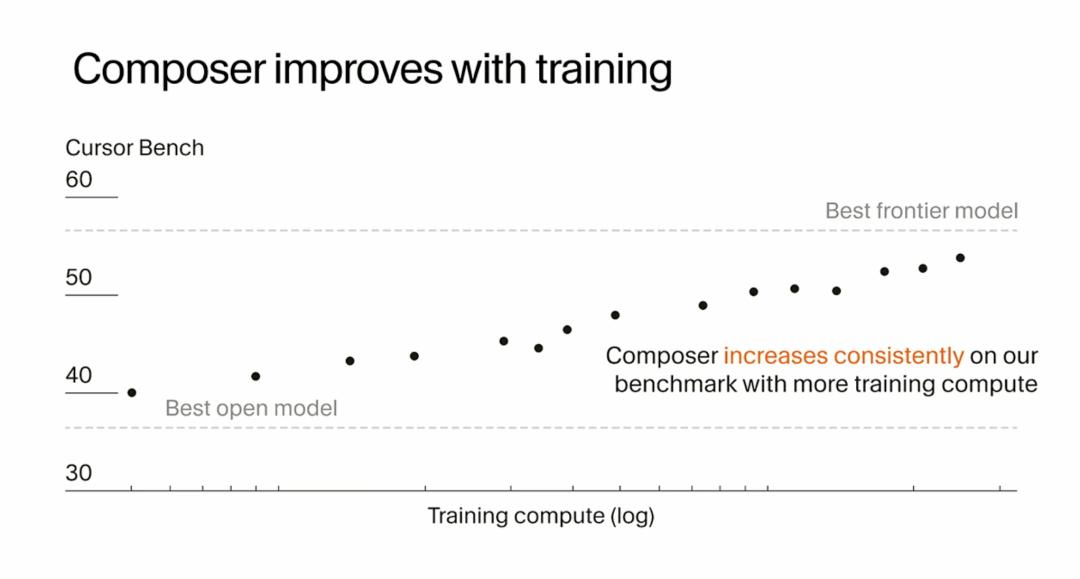

The main evidence that convinced them of the effectiveness of reinforcement learning is the improvement in model performance as they ran more steps of the rollout-check-update cycle. The model’s initial performance was roughly on par with the best open-source models in the field, but as training progressed, its performance on benchmarks steadily improved. The x-axis of this graph is on a logarithmic scale for computational effort, indicating that they invested significant computation during the reinforcement learning process. However, they saw gains associated with this computation, with model performance rising to the level of their released version.

I see this as a very good signal for the scalability of reinforcement learning, particularly its ability to extend to challenging specialized tasks. Many people question whether reinforcement learning can work on complex real-world tasks, but Cursor’s experience shows that with sufficient computational resources and the right infrastructure, reinforcement learning can indeed bring models to the cutting edge in specific domains.

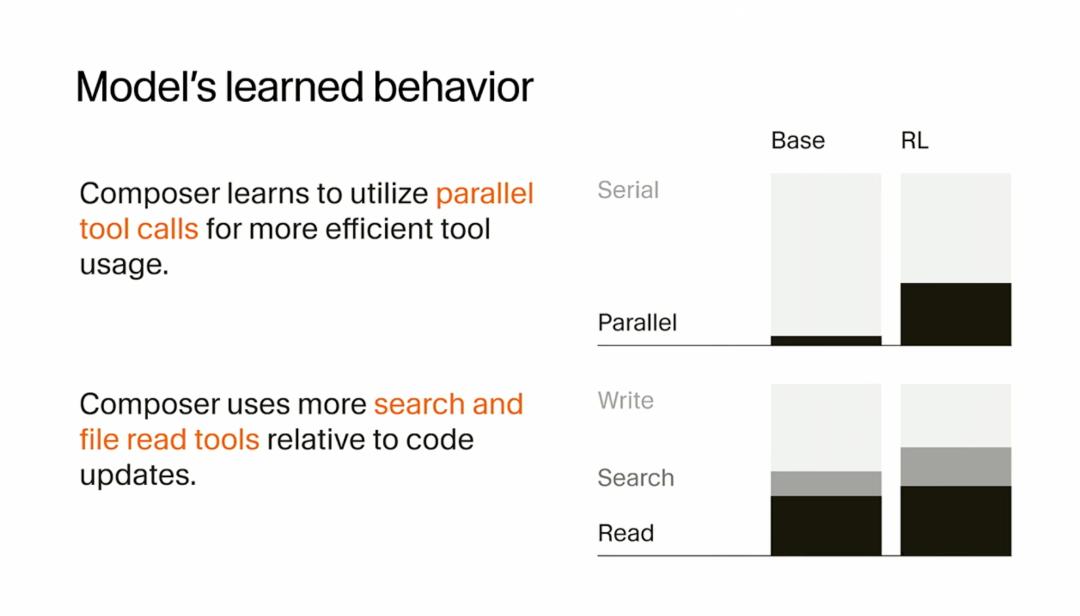

They also found that they could train the model to behave in ways they deemed useful from a product perspective. Sasha Rush previously mentioned that they want the model to be fast not only in generating tokens but also in the end-to-end user experience. One key component is enabling the model to call parallel tools. As training progressed, the model was able to call more parallel tools and respond to user queries more quickly. They believe they can further push this in future training.

I find this discovery particularly valuable as it indicates that reinforcement learning can enhance not only a model’s “intelligence” but also shape its behavioral patterns. With appropriate reward design, you can teach the model to work more efficiently, such as by parallelizing tasks and prioritizing critical steps. This level of behavioral optimization is challenging for traditional supervised learning to achieve.

They also observed that the model learned better agent behaviors. Initially, it made too many edits and performed them without sufficient evidence. As training progressed, the model began to read more files and conduct more searches to find the correct editing locations and make appropriate changes. This reminds me that good programming is not just about writing code; it is also about understanding context, finding the right places, and making reasonable decisions. Composer learned these “soft skills” through reinforcement learning.

Perhaps most importantly, users seem to love it. They released Composer a week ago, and the primary feedback is that the combination of speed and intelligence unlocks a different way of programming. People are no longer starting an agent and then scrolling through Twitter while waiting for results; they quickly obtain results and move on to the next question. As a programmer and developer, this is genuinely exciting. Sasha Rush mentioned that many internal developers are now using it in their daily work. I believe this is the best validation of a product: the people who build the tools are using them every day.

My Thoughts on Building Specialized AI Models

After listening to Sasha Rush’s presentation, I have a few profound insights to share.

First, I believe reinforcement learning is indeed very suitable for building such specialized models. This is a paradigm shift we have seen in the development of large language models over the past few years. Reinforcement learning facilitates the creation of highly intelligent target models in specific customized domains. In the past, we always pursued universal models that could do everything, but Cursor’s experience indicates that models deeply optimized for specific tasks may perform much better than general models in those tasks. This makes me think that we may see more of these specialized models in the future: models specifically for data analysis, front-end development, system architecture, and each excelling in its own domain.

Another aspect that fascinates me is how AI systems have changed the process of research and development itself. Sasha Rush mentioned that he and many in the team now have their daily work assisted by the same agents they are building. They use these agents to build dashboards, backends, and various other things. This allows them to move quickly with a small team. I find this a very interesting bootstrap process: the AI tools you build not only serve users but also serve you, enabling you to improve the tool more rapidly. This positive feedback loop may accelerate the evolution of AI tools.

Finally, although Sasha Rush stated that he is not fundamentally an infrastructure expert, seeing how much reinforcement learning is driven by infrastructure development was an eye-opener for him. It is indeed challenging and requires integrating product, scale, and machine learning training. It truly touches on all aspects of modern software systems. I completely agree with this observation. In my view, future AI companies will need not only excellent machine learning researchers but also world-class infrastructure engineers. Companies that can effectively combine the two will have a significant competitive advantage.

From a broader perspective, the story of Cursor Composer has made me rethink how AI tools should be constructed. The traditional approach is to first train a general model and then fine-tune or prompt-engineer it to adapt to specific tasks. However, Cursor took a completely different path: designing the entire system from the ground up for a specific task (programming), including model architecture, training methods, infrastructure, and product integration. I believe this end-to-end thinking is the correct way to build genuinely useful AI tools.

I am also contemplating the limitations of this approach. Reinforcement learning requires substantial computational resources, complex infrastructure, and close integration of product and training. This means not every company can adopt this method. But for those with the resources and determination, this may be the best path to creating industry-leading AI products. Cursor has already proven this path is viable, and I believe we will see more companies follow suit.

Another question worth considering is what the future of these specialized models will look like. Cursor Composer focuses on programming, but can the same approach be applied to other fields? For instance, models specifically for data analysis, content creation, customer support, etc.? I believe the answer is yes, but each field will require its own infrastructure, tool ecosystem, and training methods. This is not an easy task, but for those who can achieve it, the rewards will be substantial.

Finally, I want to say that the success of Cursor Composer once again proves a principle: true innovation often does not come from following current trends but from deeply understanding user needs and going to great lengths to meet those needs. The Cursor team was not misled by the narrative that “bigger models are better”; instead, they focused on solving the real pain points of developers: how to make AI programming assistants both smart and fast. They achieved this goal through reinforcement learning, custom infrastructure, product integration, and various other means, ultimately delivering a product that users genuinely enjoy using. This user-centered, problem-oriented mindset is something all product developers should learn.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.